Microsoft is blocking Copilot prompts that generate inappropriate images

Users are seeing a warning message suggesting content policy violations

Microsoft appears to have blocked many Copilot prompts that led its generative AI tool to create sexual, violent, and other illicit images. The tech giant started blocking these terms amid heavy backlash over the inappropriate images the tool had generated.

Earlier, a Microsoft engineer, Shane Jones, outlined these concerns in a letter to the Federal Trade Commission (FTC). In the letter, he raised serious concerns about Copilot’s ability to generate violent and illicit images.

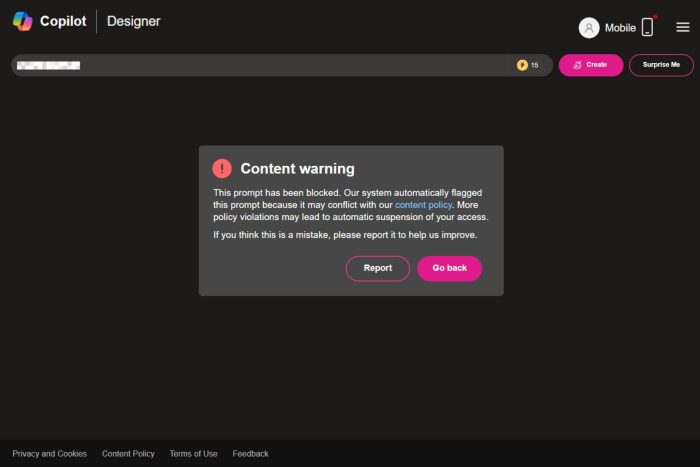

Microsoft attempts to block prompts that generate harmful images by displaying a warning message in Copilot

As reported by CNBC, entering terms like “pro choice,” “four twenty,” or “pro life” as prompts in Copilot now displays a warning message saying that the prompt is blocked. The generative AI tool further warns users that repeated violations can result in suspension of their access.

Here’s what the actual Microsoft Copilot warning message says:

This prompt has been blocked. Our system automatically flagged this prompt because it may conflict with our content policy. More policy violations may lead to automatic suspension of your access. If you think this is a mistake, please report it to help us improve.

Earlier, Copilot users could input prompts to generate images of children playing with firearms such as assault rifles. Now, users who try such a prompt get a warning that it violates Copilot’s ethical principles and Microsoft’s policies.

When CNBC reached out to Microsoft to ask about the changes, a company spokesperson said:

We are continuously monitoring, making adjustments, and putting additional controls in place to further strengthen our safety filters and mitigate misuse of the system.

Despite Microsoft’s attempt to block Copilot prompts, CNBC says users can still generate violent images using terms like “car accidents,” “bike accidents,” and more.

Let’s not forget that Microsoft is not the only company having issues with its generative AI tool. Google also recently halted Gemini’s image generation feature amid a controversy over historical accuracy.