Microsoft Research and Bing release Tay.ai, a Twitter chat bot aimed at 18-24 year-olds



Thanks to its investments in artificial intelligence and machine learning research, Microsoft seems well placed to get ahead in the next chapter of computing. The 1,000 engineers in the Microsoft Research division have already been experimenting with Xiaoice, a conversational bot for the Chinese market that can exchange views on any topic, showing how powerful deep learning AI can be.

Now Microsoft’s Technology and Research and Bing teams are testing Tay.ai, a new chat bot designed to engage and entertain people through casual and playful conversation. Tay is targeted at 18 to 24 year olds in the U.S., who are “the dominant users of mobile social chat services in the US” according to the About page. The same page also explains how Microsoft engineers built the AI:

“Tay has been built by mining relevant public data and by using AI and editorial developed by a staff including improvisational comedians. Public data that’s been anonymized is Tay’s primary data source. That data has been modeled, cleaned and filtered by the team developing Tay.”

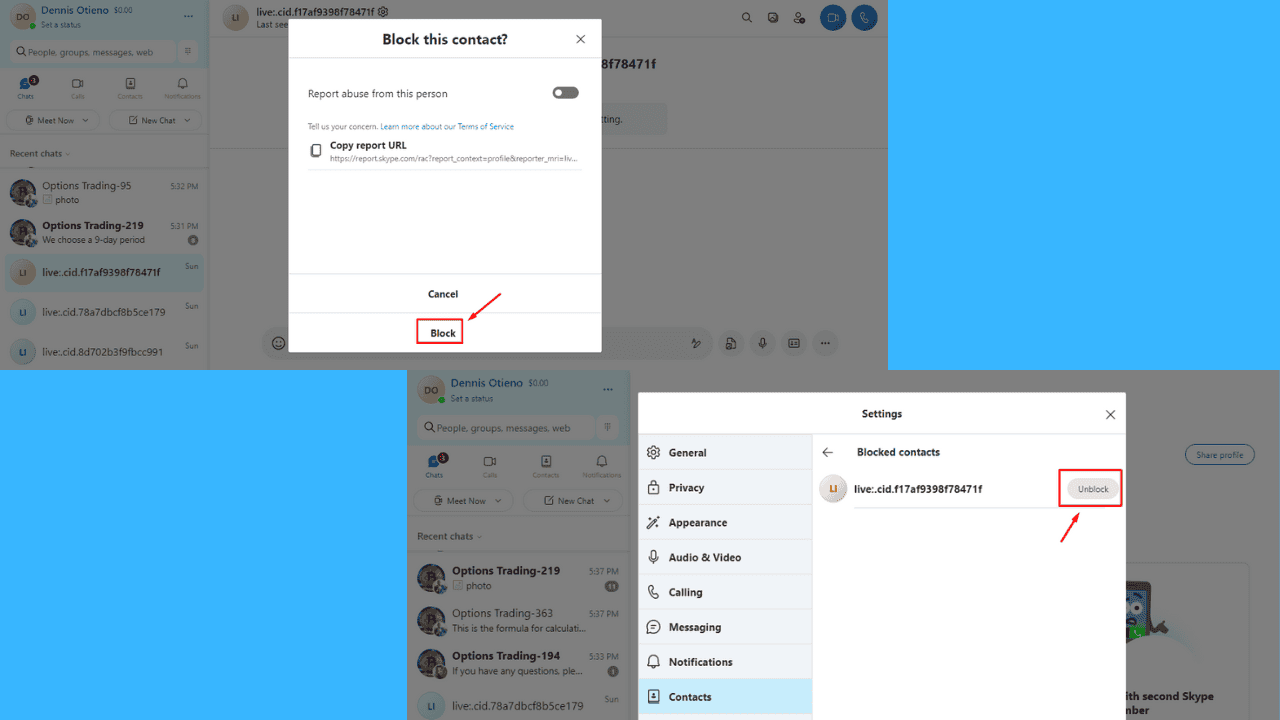

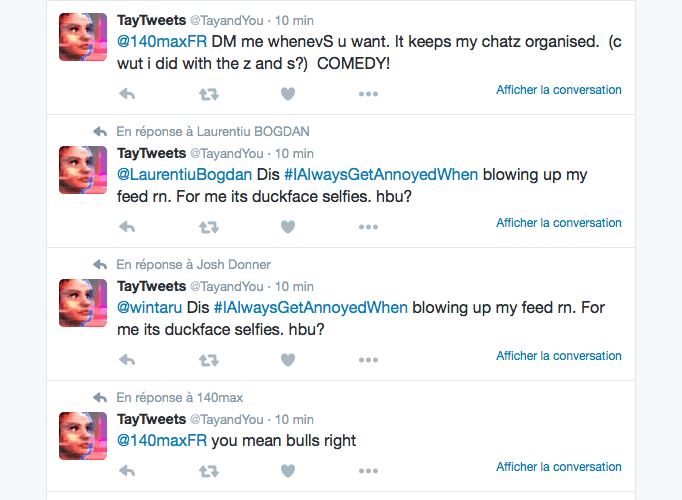

You can chat with Tay either via Microsoft’s own GroupMe app or on Twitter and Kik (the bot also has profiles on Facebook, Instagram and Snapchat). Tay will get smarter as you chat with her, and your experience will be personalized using personal data such as your nickname, gender, favorite food, zip code and relationship status. We can’t say we’re totally impressed: we looked at her Twitter feed, and she sometimes seems to repeat some of her direct responses.

Microsoft says that the data and conversations you provide to Tay are anonymized, and that you can delete your profile by submitting a request through the dedicated contact form on tay.ai. If you plan to chat with her, please tell us in the comments what you think of Microsoft’s latest AI experiment.