Microsoft provides AI governance principles following ChatGPT’s popularity

A day after its developer conference, where Microsoft plastered its AI-powered Copilot platform across almost every facet of its business portfolio, company vice chair and president Brad Smith is discussing how to govern AI going forward.

Starting off his latest Microsoft On the Issues post, Smith posits, “Don’t ask what computers can do, ask what they should do.”

Smith’s statement opens his discussion of what he and Microsoft believe are the best ways to approach this resurgence of AI-led platforms and services, namely how to make sure AI doesn’t spiral out of control the way previously lauded technologies such as social media did.

As part of constructing guardrails for the use of AI in the future, Smith and company focus on the accountability aspect of their six adopted ethical principles for AI, which he sums up as, “we must always ensure that AI remains under human control.”

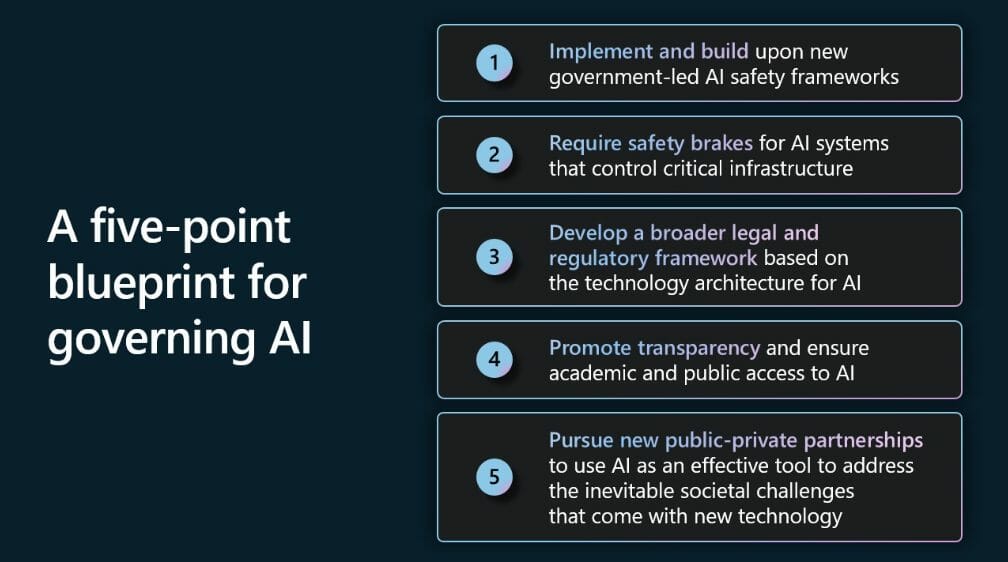

To maintain “human control”, Smith offers a five-point blueprint for governing AI: implementing new government-led AI safety frameworks, requiring safety brakes for AI systems, developing broad legal and regulatory frameworks, promoting transparency and access to AI, and pursuing public-private partnerships to address societal changes.

Details of the blueprint include the need to develop new laws and regulations for “highly capable” AI systems, and Smith suggests these be implemented by a new governing agency that understands and traffics in the knowledge of licensing AI models and AI infrastructure.

Another standout detail in Smith’s blueprint is the call for “safety brakes” for AI systems tasked with operating critical infrastructure such as electrical grids, water systems, city traffic, and domestic travel, among others. Under this point, governments would require operators to build in and regularly test safety thresholds for these systems, which would be siloed in licensed AI datacenters for an added layer of protection.

Perhaps the most consequential point is the ambitious plan to avoid the quicksand-like mistakes of social media by using AI to protect democracy and people’s rights through broad access to AI skills. While most people have access to social media as a platform, AI presents an opportunity for most people (with adequate AI skills) to build their own platforms and technologies that affect sustainability, protections, and democratized economies of scale.

Smith goes on to highlight a few more key points of his and Microsoft’s blueprint for governing AI, and I suggest you give it a read if you’re interested in the high-minded and nuanced future of the technologies the company showcased at BUILD 2023 this week.
