Microsoft is developing a new tool to mitigate AI hallucinations

There's no word on when it will be released, though



Read our disclosure page to find out how you can help Windows Report sustain the editorial team.

Microsoft is working on a new AI tool that can block and rewrite ungrounded information to produce better responses and mitigate AI hallucinations. As you may know, Copilot was initially called Bing Chat when Microsoft launched it in early 2023.

Although the AI tool was well received, there were several reports of it generating strange answers to users' queries, often labeled as AI hallucinations.

When Microsoft became aware of these errors, the company introduced limits on the number of chat turns per session and per day. By doing so, Microsoft aimed to reduce the number of errors and strange responses users were getting from the chatbot.

Microsoft is working on a new tool to block and rewrite ungrounded info to prevent AI hallucinations

Even though the Redmond giant has since lifted most of those chat turn limits, Copilot hallucinations remained a problem for users and for Microsoft itself. Recently, Microsoft took to its Source blog to explain how such errors arise in generative AI (GenAI) and how the company is working to mitigate them.

According to Microsoft, hallucinations occur when an AI model responds with ungrounded content. In other words, the model alters the data it was given or adds information that was not in that data.

This is not always a bad thing, since it can make answers from tools like Copilot and ChatGPT more creative. That said, businesses still need AI models to stick to grounded data so they get factually accurate answers.

In a recent blog post, Microsoft added that it is developing tools that can help AI models stick with grounded data to mitigate AI hallucinations. The company says:

Company engineers spent months grounding Copilot’s model with Bing search data through retrieval augmented generation, a technique that adds extra knowledge to a model without having to retrain it. Bing’s answers, index, and ranking data help Copilot deliver more accurate and relevant responses, along with citations that allow users to look up and verify information.
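To make the idea more concrete, here is a minimal Python sketch of retrieval augmented generation. It is a toy built on our own assumptions, not how Microsoft or Bing actually does it: the tiny in-memory corpus, the word-overlap ranking, and the function names `retrieve` and `build_grounded_prompt` are all hypothetical, and the step that sends the prompt to a language model is left out. The point is that the model is never retrained; the extra knowledge, with numbered citations, simply travels inside the prompt.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a deliberately simple tokenizer for illustration."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, corpus: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Rank documents by how many query terms they share (hypothetical toy ranking).

    A stand-in for a real search index; production systems use far richer signals.
    """
    q_terms = Counter(tokenize(query))
    scored = []
    for doc_id, text in corpus.items():
        # Counter & Counter keeps the minimum count of each shared term.
        overlap = sum((q_terms & Counter(tokenize(text))).values())
        if overlap > 0:
            scored.append((overlap, doc_id, text))
    # Highest overlap first; ties broken by document id so results are deterministic.
    scored.sort(key=lambda t: (-t[0], t[1]))
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def build_grounded_prompt(query: str, corpus: dict[str, str], k: int = 2) -> str:
    """Prepend retrieved passages, numbered for citation, to the user's question."""
    passages = retrieve(query, corpus, k)
    context = "\n".join(
        f"[{i}] ({doc_id}) {text}" for i, (doc_id, text) in enumerate(passages, start=1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"Sources:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    # Hypothetical example corpus; a real system would query a search index.
    corpus = {
        "doc-a": "Bing Chat launched in early 2023 and was later renamed Copilot.",
        "doc-b": "Retrieval augmented generation adds external knowledge to a prompt.",
    }
    print(build_grounded_prompt("When was Bing Chat renamed Copilot?", corpus))
```

Running it prints a prompt that contains only the passage about Bing Chat, tagged `[1]`, so a model answering from it can point back to that source, much like the citations Microsoft describes in Copilot.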

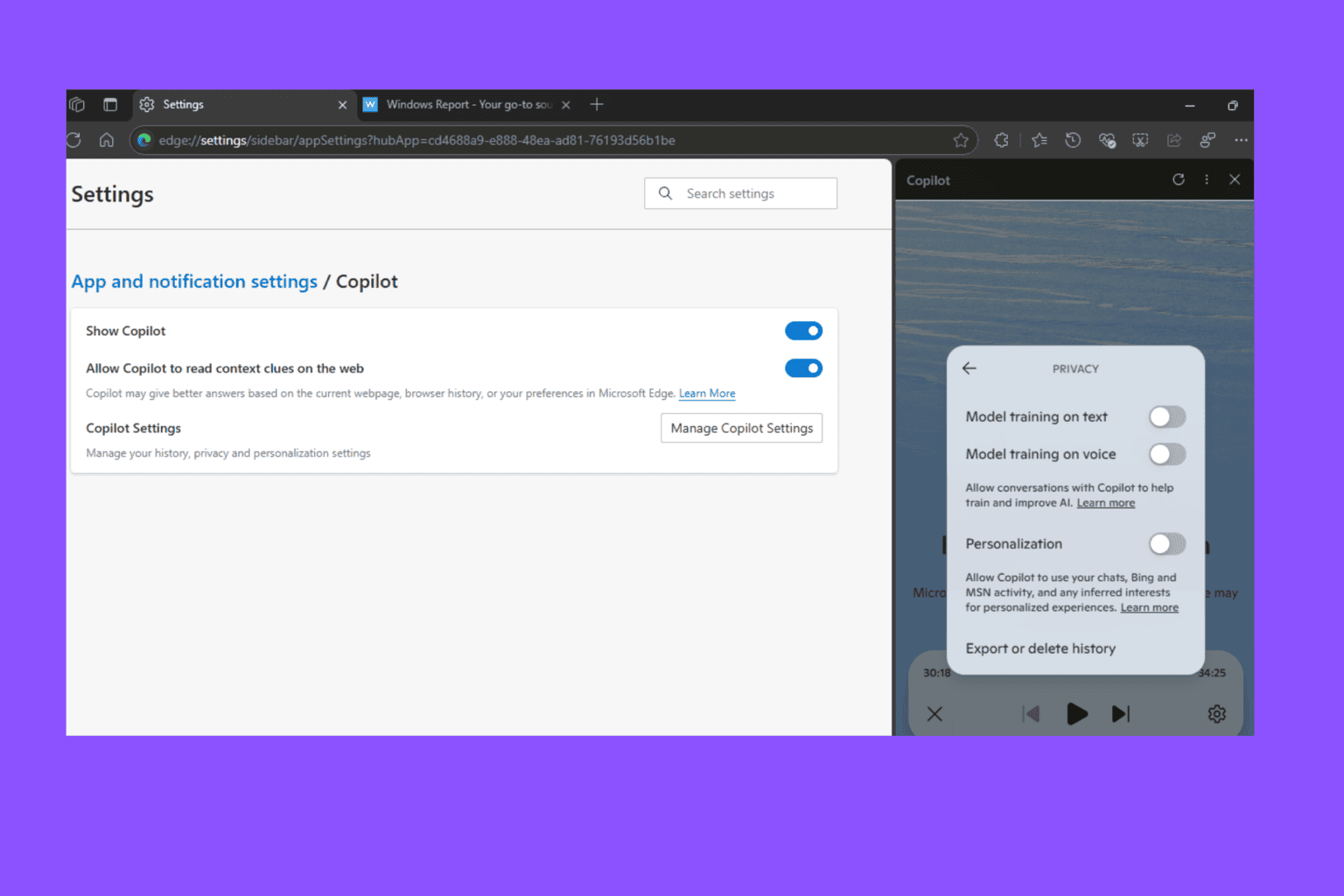

Microsoft also says that outside customers can use the On Your Data feature in Azure OpenAI Service, which lets businesses and organizations connect their AI applications to their own in-house data. Another real-time tool available to customers can help detect how grounded AI chatbot responses are.

In its blog post, Microsoft also describes another way to mitigate AI hallucinations:

Microsoft is also developing a new mitigation feature to block and correct ungrounded instances in real-time. When a grounding error is detected, the feature will automatically rewrite the information based on the data.
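Microsoft hasn't explained how this detect-and-rewrite feature works internally. The Python sketch below is purely illustrative and rests on our own simplifying assumption that a sentence counts as grounded when most of its content words appear in the source data. The names `support_score` and `correct_ungrounded`, the 0.6 threshold, and the "swap in the closest source sentence" rewrite rule are all hypothetical; production systems are far more sophisticated than word overlap.

```python
import re

# Tiny illustrative stopword list (hypothetical; real systems do not work this way).
STOPWORDS = {"a", "an", "the", "is", "was", "in", "of", "and", "to", "it", "on"}


def content_words(text: str) -> set[str]:
    """Lowercase words minus the stopword list."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter on '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def support_score(sentence: str, source: str) -> float:
    """Fraction of the sentence's content words that also appear in the source."""
    words = content_words(sentence)
    if not words:
        return 1.0  # nothing checkable, so treat it as supported
    return len(words & content_words(source)) / len(words)


def correct_ungrounded(response: str, source: str, threshold: float = 0.6) -> tuple[str, list[str]]:
    """Flag weakly supported sentences and replace each with its closest source sentence.

    Flagged sentences with no word overlap at all are simply dropped.
    Returns the corrected text and the list of flagged sentences.
    """
    source_sentences = split_sentences(source)
    kept: list[str] = []
    flagged: list[str] = []
    for sentence in split_sentences(response):
        if support_score(sentence, source) >= threshold:
            kept.append(sentence)
            continue
        flagged.append(sentence)
        # "Rewrite" step: pick the source sentence sharing the most content words.
        best = max(
            source_sentences,
            key=lambda s: len(content_words(s) & content_words(sentence)),
            default=None,
        )
        if best is not None and content_words(best) & content_words(sentence):
            kept.append(best)
    return " ".join(kept), flagged


if __name__ == "__main__":
    source = "Copilot was called Bing Chat at launch. It launched in early 2023."
    response = "Copilot was called Bing Chat at launch. It launched in 2019 on Mars."
    print(correct_ungrounded(response, source))
```

In this toy run, the first sentence is fully supported and kept, while "It launched in 2019 on Mars." is flagged and replaced with the source's "It launched in early 2023.", which mirrors the block-and-correct behavior Microsoft describes at a very high level.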

There's no committed release date for these tools yet

All that said, it is still unclear when Microsoft will make these mitigation features available. But one thing is certain: Microsoft is aware of its AI models' limitations and is working to improve them by mitigating AI hallucinations over time.

Earlier this year, Salesforce also promised that its Einstein Copilot AI would produce fewer hallucinations than other AI chatbots. Only time will tell when Microsoft will ship a concrete tool to prevent AI hallucinations, but its approach so far makes us quite optimistic.
