Microsoft's VASA-1 can generate dangerously accurate talking faces

The framework offers real-time efficiency


Microsoft has introduced a new framework known as VASA that can create lifelike talking faces. All it needs is a static image and an audio clip. From those two inputs, VASA-1, the first model built on the framework, generates a video with lip movements synchronized to the audio, along with facial expressions and natural head motions.

Why is VASA-1 better than other video generators?

VASA-1 is a complex model that uses a disentangled face latent space, meaning it represents different aspects of a face, such as expressions, separately. As a result, it can recreate expressive facial dynamics that reflect various emotions. That is why the videos generated by the Microsoft tool look more realistic than those produced by other means.

The tool has the potential to help us develop better avatars, and it could be used in various other applications. For example, @Wisemasters on TikTok uses a similar technology to animate historical faces for educational purposes. Unfortunately, those faces look stiff and out of place compared to the ones generated by VASA-1.

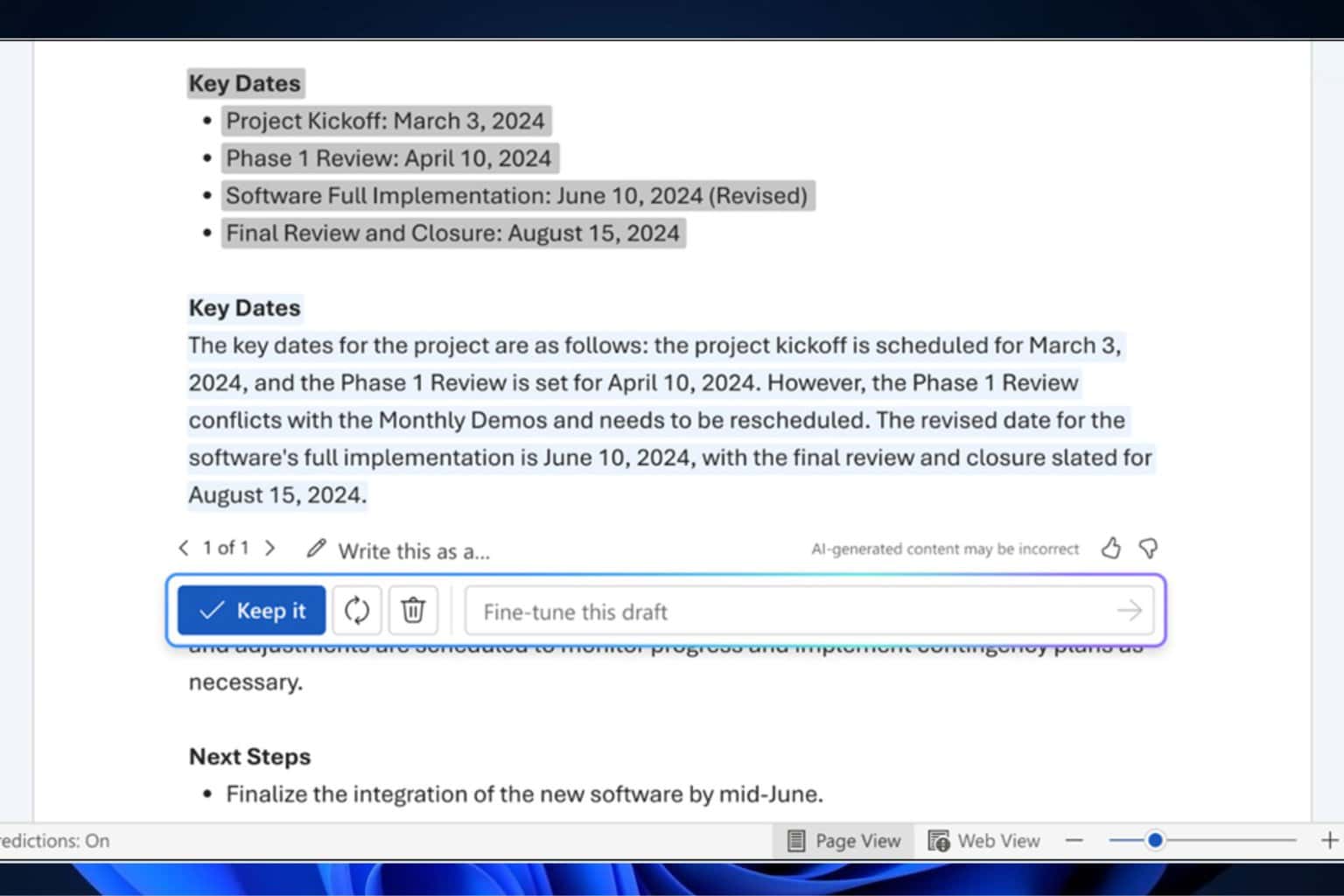

The Microsoft VASA-1 framework also gives you control over several aspects of the output. For example, you can set the eye gaze direction, adjust the head distance, and apply emotional offsets. So, you can make the generated face appear angry or happy, which makes the result more credible.

In addition, the Microsoft video generation tool can handle artistic photos, singing, and non-English speech, even though researchers didn't train the model for those cases. So, the fact that it can handle such unexpected inputs came as a surprise. One of the examples features the Mona Lisa.

Disentanglement plays a major role in the video generation capabilities of the VASA-1 framework. Because it separates the different aspects of a video, you can control and edit each one independently. For instance, you can tweak the 3D head position, facial features, and expressions.
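To make the idea more concrete, here is a toy Python sketch of what "separating aspects" means. It is only an illustration: the `FaceLatent` class, its field names, and the `with_head_pose` helper are hypothetical and are not part of VASA-1, which works with learned representations rather than hand-written fields.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FaceLatent:
    """Hypothetical stand-in for a disentangled face representation."""
    identity: tuple    # who the face belongs to (made-up numbers)
    head_pose: tuple   # (yaw, pitch, roll) in degrees
    expression: tuple  # facial expression (made-up numbers)


def with_head_pose(latent: FaceLatent, yaw: float, pitch: float, roll: float) -> FaceLatent:
    """Return a copy with a new head pose; every other field is left untouched."""
    return replace(latent, head_pose=(yaw, pitch, roll))


original = FaceLatent(
    identity=(0.2, -0.7, 0.1),
    head_pose=(0.0, 0.0, 0.0),
    expression=(0.9, 0.1),
)

# Turn the head without changing the identity or the expression.
turned = with_head_pose(original, yaw=15.0, pitch=0.0, roll=0.0)

print(turned.head_pose)
print(turned.identity == original.identity)
print(turned.expression == original.expression)
```

Because each aspect lives in its own field, changing the head pose cannot accidentally alter the expression or the identity. That is the property the article refers to as disentanglement.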

Researchers claim that the framework offers real-time efficiency: its offline batch processing mode can generate 512×512 videos at 45 FPS, and its online streaming mode supports up to 40 FPS with a latency of 170 ms.
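To put those figures in perspective, the short Python sketch below converts the frame rates into a per-frame time budget and expresses the 170 ms latency as a number of frames. The function names are our own, and the calculation is simple arithmetic on the reported numbers, not a measurement of the model.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce a single frame at the given frame rate."""
    return 1000.0 / fps


def latency_in_frames(latency_ms: float, fps: float) -> float:
    """How many frames' worth of time a given latency corresponds to."""
    return latency_ms * fps / 1000.0


offline_budget = frame_budget_ms(45)         # offline batch mode
online_budget = frame_budget_ms(40)          # online streaming mode
startup_frames = latency_in_frames(170, 40)  # reported streaming latency

print(f"45 FPS leaves about {offline_budget:.1f} ms per frame")
print(f"40 FPS leaves about {online_budget:.1f} ms per frame")
print(f"170 ms of latency equals about {startup_frames:.1f} frames at 40 FPS")
```

In other words, the model has roughly 22 to 25 ms to produce each frame, and the streaming delay amounts to fewer than seven frames.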

Is the VASA framework a potential threat?

Unfortunately, the tool comes with risks. The X user @EyeingAI calls it alarmingly realistic. After all, someone could combine your photo with AI-generated audio of your voice and use the result with ill intent. Additionally, attackers could use the model in cyberattacks and to spread misinformation and fake news.

In a nutshell, the VASA-1 framework has surprising capabilities and outperforms other video generators. In addition, you can make the videos more convincing by using its control options. Future models could help us create better avatars. However, the risks are significant, and Microsoft should prioritize minimizing the damage the tool can do.

What do you think? Are you eager to try VASA-1? Let us know in the comments.