The largest and most powerful language model trained by Microsoft and NVIDIA

Key notes

- A collaboration between Microsoft and NVIDIA has produced the largest, most powerful AI language model to date.

- The two companies worked on numerous innovations before this breakthrough.

- The language model is AI-powered and is the result of a series of trials.

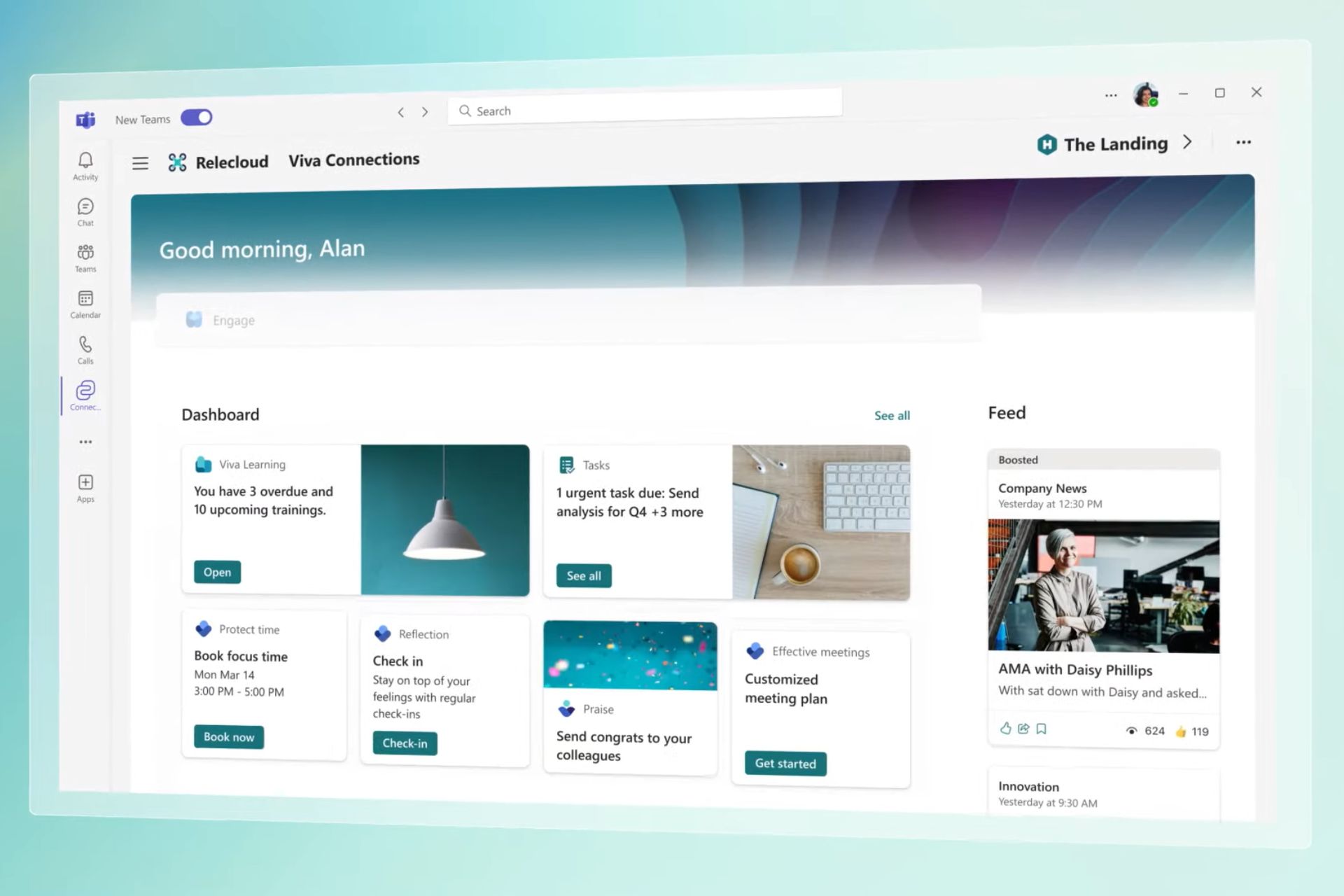

Microsoft and NVIDIA have today announced that they have successfully trained the largest and most powerful language model to date. The Megatron-Turing Natural Language Generation model (MT-NLG) is the successor to the companies’ Turing NLG 17B and Megatron-LM models.

MT-NLG has 530 billion parameters and can handle a wide range of natural language tasks. According to the two companies, it also demonstrates comprehension, reasoning, and natural language inference capabilities.
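To give a sense of what 530 billion parameters means in practice, here is a rough back-of-the-envelope sketch of the storage needed for the weights alone. The parameter count comes from the announcement; the bytes-per-parameter values are our own assumptions about common numeric formats, not figures published by Microsoft or NVIDIA, and the helper name `weights_size_gb` is purely illustrative.

```python
# Back-of-the-envelope estimate of the memory needed just to store the weights.
PARAMETERS = 530_000_000_000  # 530 billion, as stated in the article

# Assumed storage formats (not from the article): bytes used per parameter.
BYTES_PER_PARAMETER = {"fp32": 4, "fp16": 2}


def weights_size_gb(parameters, bytes_per_parameter):
    """Return the raw weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return parameters * bytes_per_parameter / 10**9


for name, width in BYTES_PER_PARAMETER.items():
    print(f"{name}: {weights_size_gb(PARAMETERS, width):,.0f} GB")
```

Under the 16-bit assumption, the weights alone come to roughly 1,060 GB, far more than the 80 GB of memory on a single A100 GPU, which helps explain why a model of this size has to be spread across many GPUs. Training needs additional memory on top of this, which the sketch does not attempt to estimate.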

First breakthrough

The two companies have worked together on several innovations in the past, but this model is considered their most powerful so far.

The quality achieved is a significant step on the journey towards unlocking the full potential of AI in natural language. The innovations behind DeepSpeed and Megatron-LM are expected to benefit current and future AI model development and to make large AI models cheaper and faster to train.

Training

The training took place across 560 NVIDIA DGX A100 servers, each with eight NVIDIA A100 80GB GPUs.
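The figures above imply a very large cluster. The short sketch below simply multiplies them out; the server count, GPUs per server, and per-GPU memory are taken from the article, while the function name `cluster_totals` is our own and only illustrative.

```python
# Cluster size arithmetic from the figures quoted in the article.
SERVERS = 560            # NVIDIA DGX A100 servers
GPUS_PER_SERVER = 8      # A100 GPUs in each server
MEMORY_PER_GPU_GB = 80   # memory per A100 80GB GPU


def cluster_totals(servers, gpus_per_server, memory_per_gpu_gb):
    """Return (total GPU count, aggregate GPU memory in GB)."""
    total_gpus = servers * gpus_per_server
    return total_gpus, total_gpus * memory_per_gpu_gb


total_gpus, total_memory_gb = cluster_totals(
    SERVERS, GPUS_PER_SERVER, MEMORY_PER_GPU_GB
)
print(f"Total GPUs: {total_gpus:,}")
print(f"Aggregate GPU memory: {total_memory_gb:,} GB")
```

That works out to 4,480 GPUs with 358,400 GB of GPU memory between them.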

Although MT-NLG can infer basic mathematical operations, its results are not entirely accurate. Even so, it appears to go beyond memorization when completing such tasks.

Such models can also pick up and amplify the biases present in the data on which they are trained.

Microsoft acknowledges these challenges and says it is committed to addressing them through continued research while minimizing potential harm to users.

For now, users can appreciate the milestone reached as we wait to see what comes next.

What are your thoughts on the collaboration between Microsoft and NVIDIA? Do you have any expectations? Let us know in the comment section below.